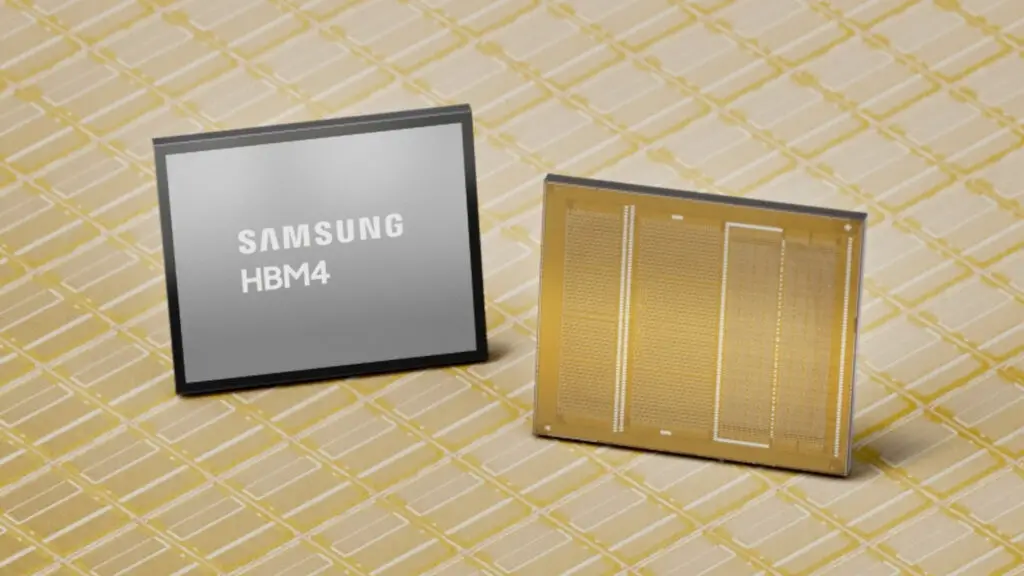

The AI-fuelled memory supply crisis is reshaping the landscape of global technology, creating ripples that extend far beyond the server room. At Digital Tech Explorer, we’ve been tracking how the surge in demand for specialized memory is causing a shift in wafer production priorities. While consumer-grade RAM hasn’t been the primary target, the hunger for High Bandwidth Memory (HBM) in AI datacenters is reaching a fever pitch. Leading the charge is Samsung, which recently unveiled its industry-first commercial HBM4, setting a new benchmark for performance.

The Premium Cost of Innovation: HBM4 Pricing

In our analysis of the current market, Samsung’s latest HBM4 units are commanding an estimated price of $700 per module, a 20–30% premium over the previous HBM3E generation. This steep climb isn’t just about the tech; it’s a strategic move. As TechTalesLeo, I’ve observed that the profitability of commodity DRAM is starting to rival that of HBM, prompting Samsung to pivot its production strategy.

Rather than flooding the market, Samsung is meticulously managing its HBM capacity. By focusing on profitability over sheer volume, the company is insulating itself against potential market shifts while maintaining its competitive edge. This calculated approach is meant to keep Samsung a premier supplier for high-performance hardware without the risks of oversupply.

Market Comparison: HBM Generations

| Memory Generation | Primary Focus | Estimated Price (Per Unit) | Major Adopters |
|---|---|---|---|
| HBM3E | AI Training & Inference | ~$550 – $600 | Google, Meta, Nvidia |
| HBM4 | Next-Gen AI & Supercomputing | ~$700 | Nvidia (Primary) |
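As a rough sanity check on the figures in the table, the short sketch below computes the premium implied by the estimated prices. The function and variable names are purely illustrative, and the inputs are the estimates quoted in this article, not confirmed contract pricing.

```python
def premium_pct(new_price: float, old_price: float) -> float:
    """Return the percentage premium of new_price over old_price."""
    return (new_price / old_price - 1.0) * 100.0


# Estimated per-unit prices in USD, taken from the table above (assumptions).
HBM4_PRICE = 700.0
HBM3E_LOW, HBM3E_HIGH = 550.0, 600.0

# The premium is smallest against the top of the HBM3E range
# and largest against the bottom of it.
premium_vs_high = premium_pct(HBM4_PRICE, HBM3E_HIGH)
premium_vs_low = premium_pct(HBM4_PRICE, HBM3E_LOW)

print(f"Implied premium: {premium_vs_high:.1f}% to {premium_vs_low:.1f}%")
# prints "Implied premium: 16.7% to 27.3%"
```

On these estimates the implied premium works out to roughly 17% to 27%, which overlaps with the 20–30% range quoted earlier but sits slightly below it. A gap of that size is unsurprising given how approximate the underlying price estimates are.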

Nvidia’s Dominance and the Road to GTC 2026

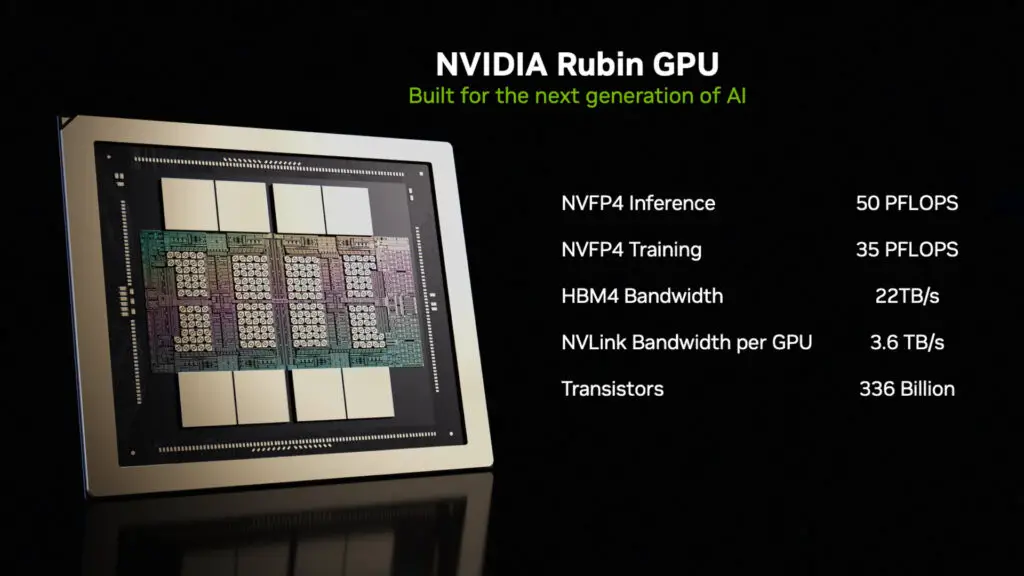

Currently, Nvidia stands as the primary heavyweight securing HBM4 orders for the upcoming year. While other tech giants like Google are still optimizing their AI acceleration efforts using the more mature HBM3E supply, Nvidia is pushing the envelope. We expect to see the fruits of this partnership at the GTC 2026 conference this March.

The star of the show is anticipated to be the Nvidia Vera Rubin, a “superchip” boasting an incredible six trillion transistors. The chip is rumored to be the first to leverage TSMC’s ultra-advanced A16 chip node. As we prepare for the GTC kickoff in San Jose on March 16, the industry is bracing for a shift in what we consider “standard” for AI performance.

What This Means for the Gaming Community

For those of us in the gaming world, HBM remains an elusive luxury. While AMD previously tinkered with the technology in the Radeon RX Vega series, it never quite transitioned into a mainstream standard for consumer cards. Most modern rigs still rely on GDDR memory, which offers a better balance of price and performance for local rendering.

At Digital Tech Explorer, our mission is to help you navigate these complex shifts. While HBM4 might not be inside your next gaming PC, the innovations it drives in AI and machine learning will eventually trickle down into the software and tools we use every day. Stay tuned as we continue to bring you the stories behind the silicon.