The integrity of benchmarking is under the microscope once again. Primate Labs, the architects behind the widely used Geekbench software, has renewed its critique of Intel’s Binary Optimization Tool (BOT). At Digital Tech Explorer, we prioritize transparency and real-world testing, and the latest findings from Primate Labs suggest a growing divide between synthetic scores and actual user experience. According to the developers, Intel BOT focuses on a narrow selection of applications, potentially creating a performance narrative that doesn’t hold up under typical daily workloads.

Geekbench Flags Intel’s Latest Performance Metrics

In a move to protect the accuracy of its database, Primate Labs recently announced that Geekbench results generated using Intel’s new Arrow Lake Plus CPUs will be flagged. This decision follows rigorous internal testing that revealed the BOT software’s influence extends further than previously thought. Interestingly, these tests were conducted on a Panther Lake laptop, uncovering that Intel’s upcoming mobile architecture also utilizes BOT, a detail Intel had not previously highlighted during its product showcases.

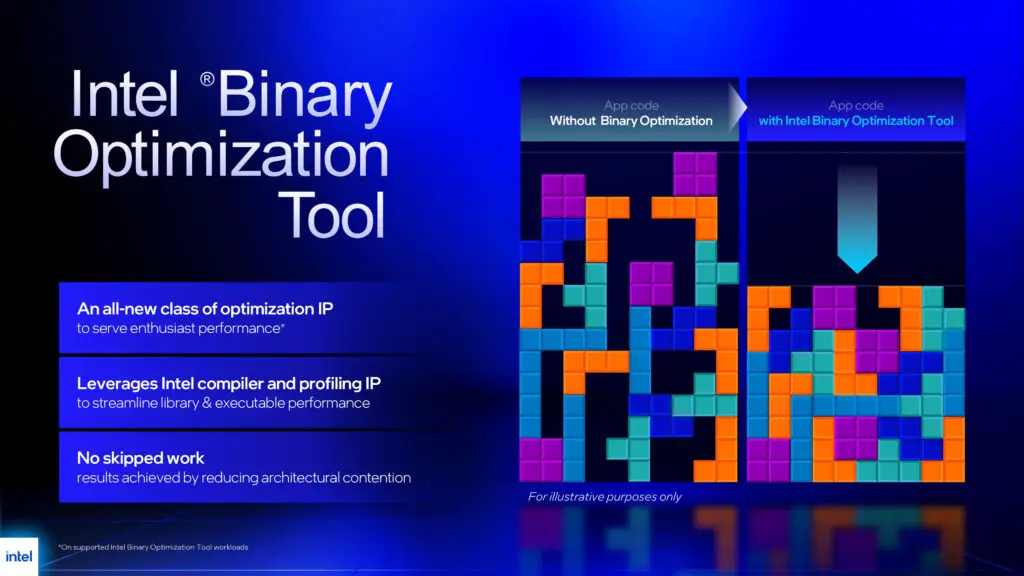

The Technical Deep Dive: Beyond Code Reordering

TechTalesLeo here, and as someone who loves uncovering the narrative behind the silicon, I find the mechanics of BOT particularly intriguing. While Intel initially described the tool as a relatively simple code-reordering mechanism, Primate Labs’ investigation suggests something far more sophisticated. Their analysis found that BOT heavily vectorizes specific parts of the code. In the HDR workload test, this resulted in a 14% reduction in total instructions. By converting scalar instructions (which operate on a single value) into vector instructions (which operate on eight values at once), the software effectively “shortcuts” the traditional processing path to boost scores.
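To make the scalar-versus-vector distinction concrete, here is a minimal Python sketch that models it rather than running real SIMD code. It is not Intel’s BOT and not Geekbench’s HDR workload; the brightness-scaling pass and the `scale_scalar`/`scale_vector` names are hypothetical, and each loop step merely stands in for one instruction. The 8-lane width is an assumption matching the “eight values at once” described above (for example, eight 32-bit floats in a 256-bit register).

```python
from typing import List, Tuple

# Assumption: 8 lanes per vector instruction (e.g. eight 32-bit floats in 256 bits).
LANES = 8


def scale_scalar(pixels: List[float], gain: float) -> Tuple[List[float], int]:
    """Hypothetical scalar pass: one multiply 'instruction' per value."""
    out: List[float] = []
    ops = 0
    for p in pixels:
        out.append(p * gain)
        ops += 1  # one scalar multiply handles exactly one value
    return out, ops


def scale_vector(pixels: List[float], gain: float) -> Tuple[List[float], int]:
    """Hypothetical vectorized pass: one 'instruction' per chunk of LANES values."""
    out: List[float] = []
    ops = 0
    for i in range(0, len(pixels), LANES):
        chunk = pixels[i:i + LANES]
        # Models a single 8-wide multiply; the final chunk may be partially filled.
        out.extend(p * gain for p in chunk)
        ops += 1
    return out, ops


if __name__ == "__main__":
    pixels = [float(i) for i in range(64)]
    s_out, s_ops = scale_scalar(pixels, 1.5)
    v_out, v_ops = scale_vector(pixels, 1.5)
    print(s_out == v_out, s_ops, v_ops)
```

Both functions produce identical results, but the modeled instruction count for this loop drops by up to a factor of eight. A real workload also contains code that cannot be vectorized this way, which is why a figure like the 14% total-instruction reduction reported for the HDR test is far smaller than the per-loop ratio in this toy model.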

However, this optimization comes with a hidden cost: latency. During the initial execution of Geekbench 6.3 with BOT enabled, researchers observed a staggering 40-second delay at startup. While subsequent runs dropped to a 2-second delay, that initial overhead stands in stark contrast to the seamless experience developers and enthusiasts expect from modern hardware.

Comparison of Performance Metrics: Standard vs. BOT Optimized

| Metric | Standard Execution | Intel BOT Optimized |
|---|---|---|
| HDR Workload Instructions | 100% (Baseline) | 86% (14% Reduction) |
| Initial App Startup Delay | Near Instant | 40 Seconds |
| Subsequent Startup Delay | None | 2 Seconds |
| Instruction Method | Standard Scalar | Aggressive Vectorization |

What This Means for the Tech Community

The core of the issue lies in comparability. If a benchmark result is only achievable through highly specific, hand-tuned optimizations that don’t apply to the vast majority of other software, is it still a valid measure of a CPU’s power? These findings suggest that Intel may be creating an inflated perception of performance when compared to competitors like AMD.

At Digital Tech Explorer, we believe that whether you are a software engineer or a gaming enthusiast, you deserve metrics that reflect real-world usage. As BOT requires specific intervention from Intel Labs for every supported application, it is unlikely to provide a universal performance uplift. For now, the tech community is advised to view BOT-enhanced benchmark scores with a healthy dose of skepticism.

Disclaimer: All content on Digital Tech Explorer is for informational and entertainment purposes only. Some of the links included are affiliate links, meaning we may earn a commission at no additional cost to you.