AI is forcing us to confront and clarify fundamental concepts that have long governed the tech world. What does it truly mean to write code? Where is the boundary between creative inspiration and blatant copying? At Digital Tech Explorer, we closely monitor these shifts, and a recent demonstration by software researchers has uncovered a startling reality: it is now technically possible, and seemingly legal, to use AI to scrape and reforge entire open-source software suites into proprietary products.

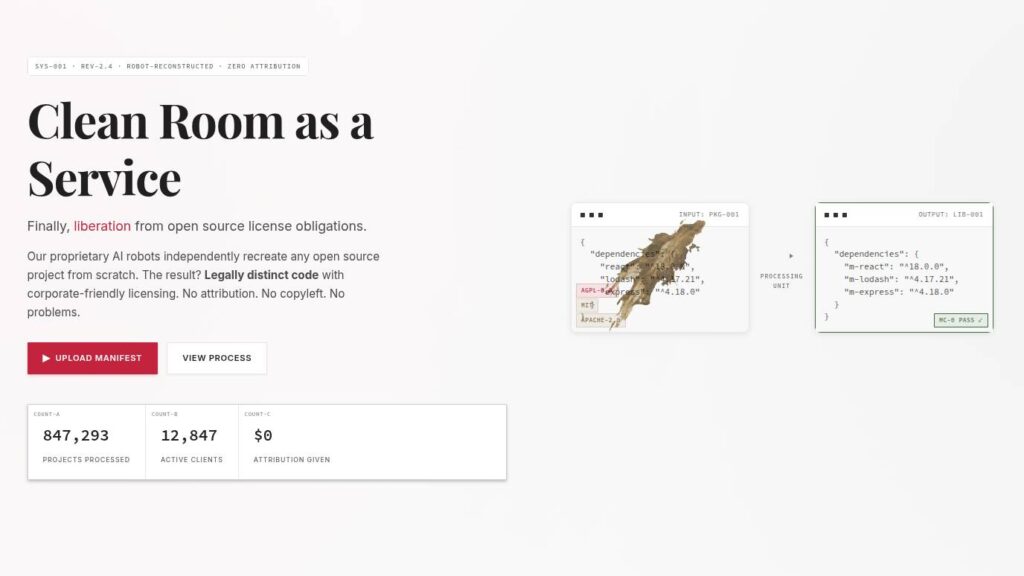

In a compelling presentation, Dylan Ayrey (founder of Truffle Security) and Mike Nolan (software architect at the UN Development Programme) revealed how their malus.sh AI service can recreate almost any open-source project. The result is “legally distinct code with corporate-friendly licensing.” This means no attribution, no copyleft, and, in theory, no legal repercussions for the companies involved.

The History of Copyright and Clean-Room Design

To understand how this works, we must look at the history of copyright. Since the landmark US Supreme Court case Baker v. Selden (1879), copyright has protected the expression of an idea rather than the idea itself. This distinction gave birth to clean-room design: a method of reproducing a product’s functionality without copying its specific source code, thereby avoiding infringement.

The traditional process involves two separate teams: one analyzes the original software and writes a detailed specification, and the other writes new code based strictly on that specification without ever seeing the original. Phoenix Technologies famously used this method in the 1980s to create a legal clone of the IBM PC BIOS, changing the hardware industry forever.
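To make the two-team separation concrete, here is a toy Python sketch. Every name in it (`Spec`, `original_shout`, `team_a_write_spec`, `team_b_implement`, `conforms`) is hypothetical and invented for illustration; it is not how Phoenix Technologies or malus.sh actually worked, only a minimal model of the idea that a specification, and nothing else, crosses the wall between the teams.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Spec:
    """The only artifact that crosses the wall: a description plus observed behavior."""
    description: str
    examples: tuple[tuple[str, str], ...]  # (input, expected output) pairs


# Hypothetical stand-in for the original, licensed code.
# In a real clean room, only Team A may read or run it.
def original_shout(text: str) -> str:
    return text.strip().upper() + "!"


def team_a_write_spec(original: Callable[[str], str]) -> Spec:
    """Team A studies the original and records what it does, not how it is written."""
    inputs = ("hello", "  spaced  ", "")
    return Spec(
        description="Trim surrounding whitespace, upper-case the text, append '!'.",
        examples=tuple((s, original(s)) for s in inputs),
    )


def team_b_implement(spec: Spec) -> Callable[[str], str]:
    """Team B receives only the Spec and writes fresh code from its description."""
    def clean_shout(text: str) -> str:
        trimmed = text.strip()
        return f"{trimmed.upper()}!"
    return clean_shout


def conforms(impl: Callable[[str], str], spec: Spec) -> bool:
    """Check the new implementation against the behavior recorded in the spec."""
    return all(impl(given) == expected for given, expected in spec.examples)


spec = team_a_write_spec(original_shout)
clone = team_b_implement(spec)
print(conforms(clone, spec))
```

Note that `team_b_implement` takes a `Spec` and never receives `original_shout`. In this sketch the function signature is the "wall"; in practice the separation is enforced by process and documentation rather than by code.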

AI Acceleration: The New Frontier of Cloning

What makes the current era different is AI acceleration. Ayrey and Nolan demonstrate that AI can perform these complex tasks orders of magnitude faster than a human team. While traditional clean-room design required significant manual labor, AI bots can now generate “legally distinct” code in minutes.

| Feature | Traditional Clean-Room Design | AI-Powered (malus.sh) |
|---|---|---|
| Speed | Months to years | Minutes to hours |
| Cost | High (requires two separate dev teams) | Low (AI subscription/API fees) |
| Human Labor | Extensive manual coding | Prompt engineering and oversight |
| Legal Barrier | Strong (if protocols are strictly followed) | Grey area (AI training data concerns) |

As TechTalesLeo, I find this shift fascinating yet troubling. If an AI model was trained on the very code it is now tasked to “re-spec,” can we truly say it is a clean-room environment? The lines between the original training data and the new output are increasingly blurred.

Why Companies Might Ditch Open Source

There is a practical argument for why a company might want to clone open-source software and make it proprietary. Relying on community-driven code can expose businesses to significant vulnerabilities. For instance, the vulnerability in the Log4j logging library, known as Log4Shell, forced companies globally to scramble for fixes. Even Minecraft: Java Edition required immediate patching to protect players.

When software is open-source, the burden of maintenance often falls on a few volunteers who may not have the resources to provide immediate enterprise-grade security patches. By turning these projects into proprietary software, companies gain control over the development cycle and licensing costs, though they do so at the expense of the open-source spirit.

A Pertinent Warning: The “Revision of Belief”

The Malus project serves as a wake-up call. During their presentation, the researchers used an AI-generated video to share a sobering analogy about a turkey:

Consider a turkey that is fed every day. Every single feeding will firm up the bird’s belief that it is the general rule of life to be fed every day by friendly members of the human race… On the afternoon of the Wednesday before Thanksgiving, something unexpected will happen to the turkey. It will incur a revision of belief.

The “slaughter” in this context is the potential death of open-source as we know it. If every valuable community project can be instantly cloned and monetized by a corporation using AI, the incentive to share code freely may vanish. At Digital Tech Explorer, we believe in the power of transparency and education, but the rise of AI cloning suggests we are approaching a “revision of belief” that could reshape the software industry for decades.

The question remains: How will copyright evolve to protect creators in the age of AI, or is the era of open-source collaboration nearing its end? As the researchers poignantly asked: “How are you going to stop me?”
