At Digital Tech Explorer, we’ve followed Nvidia’s journey for years, but this year’s GTC event revealed a fascinating shift in the landscape of digital innovation. While much of the world focuses on how AI can generate art or text, Nvidia is looking inward. Our lead storyteller, TechTalesLeo, dives into how the company is using its own hardware and specialized AI agents to design the next generation of GPU architecture.

During a deep-dive session titled “Advancing to AI’s Next Frontier,” Bill Dally, Nvidia’s chief scientist, chatted with Google’s Jeff Dean about the behind-the-scenes mechanics of chip design. The revelation was clear: Nvidia is no longer just building hardware for AI; its chips are increasingly being designed with AI.

AI Streamlines Chip Design with NVCell

One of the most labor-intensive parts of chip design is porting a standard cell library to a new process node. A cell library is essentially a catalog of pre-designed logic gates and their interconnections, the basic building blocks that are assembled into complex components like texture units. Traditionally, porting it was a monumental human effort.

“Every time we have a new semiconductor process, we have to port our standard cell library of about 2,500 to 3,000 cells,” Dally explained. “That used to take a team of eight people about 10 months.”

Enter NVCell, a reinforcement learning-based program that has revolutionized this workflow. The efficiency gains, as shown in the table below, are staggering:

| Metric | Human Design Team | NVCell (AI Agent) |
|---|---|---|
| Time Required | 10 Months (80 person-months) | Overnight |
| Resource Usage | 8 Full-time Engineers | Single GPU |
| Design Quality | Standard baseline | Exceeds human size/power efficiency |

By using AI acceleration, Nvidia isn’t just saving time; they are creating designs that dissipate less power and operate with less delay than those created by human engineers.
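To put the human-team numbers in perspective, here is a quick back-of-the-envelope calculation based on Dally's figures (eight people, 10 months, 2,500 to 3,000 cells). The function name and the 160 working hours per person-month are our own assumptions for illustration, not figures from the talk.

```python
def porting_effort(engineers: int, months: int, cell_count: int,
                   hours_per_month: float = 160.0) -> tuple[int, float]:
    """Return (person-months, approximate engineer-hours per cell).

    hours_per_month is an assumed figure (about 40 hours x 4 weeks),
    not a number quoted by Nvidia.
    """
    person_months = engineers * months
    hours_per_cell = person_months * hours_per_month / cell_count
    return person_months, hours_per_cell


if __name__ == "__main__":
    # Dally's quoted range for the library size.
    for cells in (2500, 3000):
        total, per_cell = porting_effort(engineers=8, months=10, cell_count=cells)
        print(f"{cells} cells: {total} person-months, ~{per_cell:.1f} hours per cell")
```

Under that assumption, the manual port works out to roughly four to five engineer-hours per cell, which is the workload NVCell is said to compress into a single overnight run.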

Accelerating Design Verification and Optimization

The innovation doesn’t stop at cell libraries. Nvidia is also tackling “design verification,” the process of proving a design works before it is sent to TSMC for manufacturing. By using AI to collapse the time spent on verification, Nvidia can move from concept to hardware much faster.

Another breakthrough involves PrefixRL. This applies reinforcement learning to a classic problem in computer design: arranging carry-lookahead chains, the logic that lets digital adders compute their carries quickly. Dally noted that the AI treats the problem like an Atari video game, finding “totally bizarre” designs that humans would never consider. These AI-generated designs are often 20% to 30% more efficient than traditional human-made layouts.
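For readers curious why carry-lookahead chains leave room for “bizarre” designs at all, here is a minimal Python sketch of the underlying idea. It is not Nvidia's code: the function names are ours, and the two networks shown (a serial chain and the textbook Kogge-Stone arrangement) are just two well-known points in a much larger space of valid layouts. Each one computes the same carries but trades the number of logic operators against the number of levels a signal must pass through. We assume that this kind of trade-off is what an agent would be searching over.

```python
from typing import Callable, List, Tuple

GP = Tuple[int, int]  # (generate, propagate) signals for a span of bits


def combine(hi: GP, lo: GP) -> GP:
    """Prefix operator: merge a span with the less-significant span below it."""
    g_hi, p_hi = hi
    g_lo, p_lo = lo
    return (g_hi | (p_hi & g_lo), p_hi & p_lo)


def bit_signals(a: int, b: int, width: int) -> List[GP]:
    """Per-bit generate (a AND b) and propagate (a XOR b)."""
    return [(((a >> i) & (b >> i)) & 1, ((a >> i) ^ (b >> i)) & 1)
            for i in range(width)]


def ripple_prefix(gp: List[GP]) -> Tuple[List[GP], int, int]:
    """Serial chain: fewest operators, but depth grows with the width."""
    out = [gp[0]]
    for i in range(1, len(gp)):
        out.append(combine(gp[i], out[i - 1]))
    return out, len(gp) - 1, len(gp) - 1


def kogge_stone_prefix(gp: List[GP]) -> Tuple[List[GP], int, int]:
    """Kogge-Stone network: logarithmic depth, many more operators."""
    out, ops, depth, dist = list(gp), 0, 0, 1
    while dist < len(out):
        nxt = list(out)
        for i in range(dist, len(out)):
            nxt[i] = combine(out[i], out[i - dist])
            ops += 1
        out, depth, dist = nxt, depth + 1, dist * 2
    return out, ops, depth


PrefixFn = Callable[[List[GP]], Tuple[List[GP], int, int]]


def add_with_prefix_network(a: int, b: int, width: int,
                            prefix_fn: PrefixFn) -> Tuple[int, int, int]:
    """Add two unsigned integers; return (sum, operator count, depth)."""
    gp = bit_signals(a, b, width)
    prefixes, ops, depth = prefix_fn(gp)
    carries = [0] + [g for g, _ in prefixes]  # carry into each bit, carry-in = 0
    total = 0
    for i in range(width):
        total |= (gp[i][1] ^ carries[i]) << i
    total |= carries[width] << width  # final carry-out
    return total, ops, depth


if __name__ == "__main__":
    for name, fn in (("ripple", ripple_prefix), ("kogge-stone", kogge_stone_prefix)):
        s, ops, depth = add_with_prefix_network(200, 100, 8, fn)
        print(f"{name}: sum={s}, operators={ops}, depth={depth}")
```

For 8-bit operands, the serial chain needs 7 operators at a depth of 7, while the Kogge-Stone network needs 17 operators at a depth of 3. Both produce the same sum. Between those extremes sit many other valid arrangements, which is why it is plausible for a game-playing agent to stumble on layouts no human would draw.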

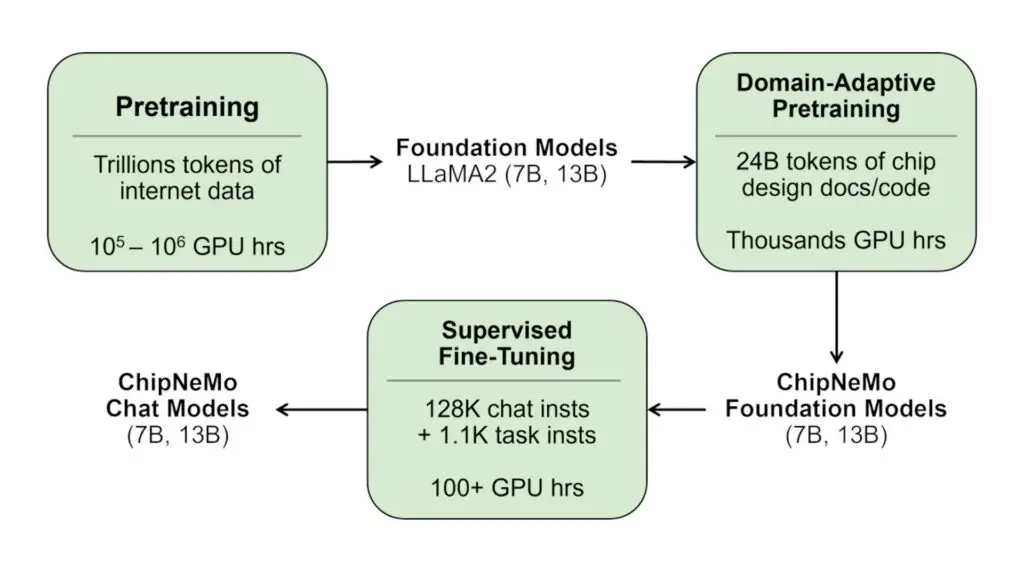

ChipNeMo: The Specialized LLM for Hardware Engineers

While automation handles the physical layout, Nvidia is also using Large Language Models (LLMs) to manage internal knowledge. The company developed ChipNeMo, an LLM specialized for chip design by training it on Nvidia’s proprietary design documents and RTL (Register-Transfer Level) code.

This acts as a “GPU-GPT” for internal staff. One of the most practical benefits is for junior designers. Instead of interrupting senior engineers to ask how a specific texture unit functions, they can query ChipNeMo for a detailed, technical explanation based on decades of Nvidia’s internal expertise.

As we continue to explore the intersection of gaming, hardware, and AI at Digital Tech Explorer, it’s clear that the tools used to build our favorite tech are becoming just as advanced as the products themselves. By leveraging AI to design AI, Nvidia is effectively shortening the path to the future of computing.
