The digital landscape in the UK is shifting beneath our feet. Age verification checks are no longer a distant proposal; they are a lived reality under the Online Safety Act. However, as we navigate the fallout from Discord’s recent implementation hurdles, it’s becoming clear that this rollout is fraught with complications that every tech enthusiast and privacy advocate should be watching closely.

At Digital Tech Explorer, we keep a close eye on how legislation intersects with software usability. Currently, accessing simple features like Bluesky direct messages requires an interaction with the Epic Games-owned KWS (Kids Web Services) system. Users must provide a bank card or a government ID, or submit to a facial scan. It isn’t just Bluesky; similar hurdles have materialized on Reddit, Discord, and even Xbox’s online services.

The UK’s Online Safety Act: A Catalyst for Change

The Online Safety Act, which became law in October 2023 and whose child-safety duties became enforceable in July 2025, aims to shield minors from “harmful content.” This broad category encompasses everything from pornography to content promoting self-harm or bullying. While the intent is to create a safer internet, the execution has forced platforms to build digital “age-gates.”

Interestingly, while a majority of the British public supports the intent of the law, a significant portion remains skeptical of its efficacy. For many tech-savvy users, the immediate reaction is to reach for a VPN to bypass regional blocks. However, for the average user, these gates represent a permanent change in how they interact with global social platforms.

Summary of Age Verification Impact on Major Platforms

| Platform | Primary Verification Method | Verification Vendor |
|---|---|---|
| Discord | Facial Scan / ID Upload | K-ID / Persona (Experimental) |
| Bluesky | Bank Card / ID / Face Scan | KWS (Epic Games) |
| Reddit | Self-Declaration / Prompted ID | Internal / Third-party |
| Xbox | Credit Card / Government ID | Microsoft Internal |

Privacy Risks and the Discord Data Security Incident

The primary concern for the community at Digital Tech Explorer isn’t just the inconvenience; it’s the data. Handing over sensitive documents or biometric data to third-party vendors creates new points of failure. In late 2025, Discord disclosed a breach at a third-party customer-support provider that may have exposed around 70,000 government ID photos submitted for age verification. While Discord’s primary partner, K-ID, clarified they were not involved in that specific leak, the event highlights the inherent risks of centralized identity databases.

As a storyteller in the tech space, I’ve seen many “temporary” data solutions become permanent vulnerabilities. Refusing these checks isn’t just about stubbornness; it’s a calculated move to minimize one’s digital footprint and prevent sensitive documents from ending up on the dark web.

Discord’s Global Ambitions and the “Persona” Experiment

Discord initially used the UK as a testing ground, but the platform recently announced plans to roll out facial scanning and ID checks globally. This “compliance in advance” strategy suggests that the UK’s legislative framework may soon become the global standard for social media. However, Discord’s choice of partners has raised eyebrows in the developer community.

A recent “experiment” involved Persona, an age-assurance vendor with ties to Peter Thiel (co-founder of Palantir). Although Persona and Palantir operate independently, the connection to high-level surveillance technology has made users uneasy. Furthermore, reports emerged of a Persona frontend being exposed on a US government-authorized server, adding fuel to the privacy fire.

The Nuance of Content Moderation

There is a difficult balance to strike here. Most of us agree that protecting children from harassment and inappropriate content is a noble goal. However, “harmful content” is a dangerously subjective term. In the past, overly broad laws like Section 28 in the UK effectively silenced LGBTQIA+ resources in schools. There is a valid fear that aggressive age-gating will inadvertently block access to vital educational resources regarding art, health, and identity.

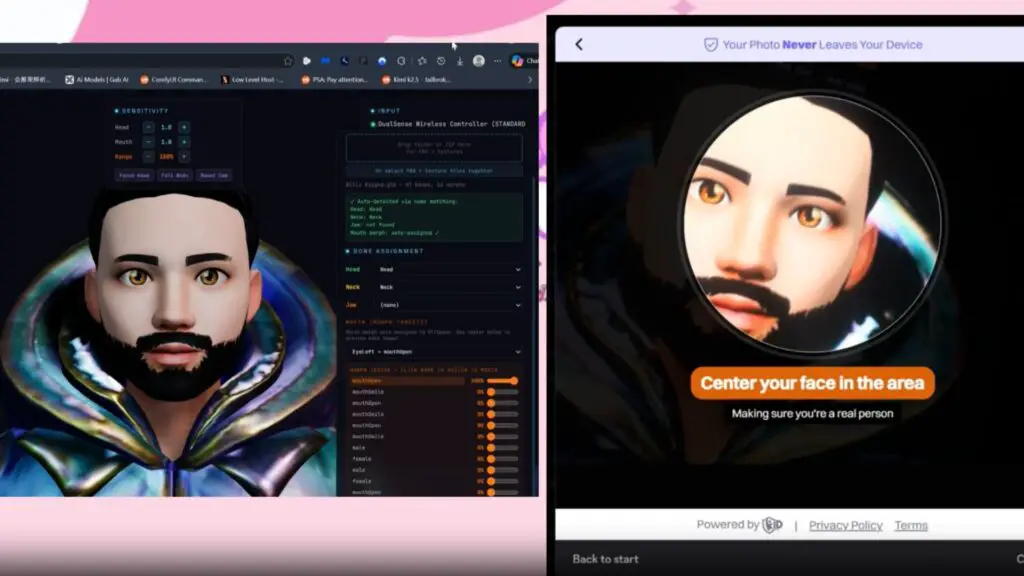

For those looking for workarounds, the tech community is already active. From using 3D models to spoof facial scans to utilizing VPNs, the “cat and mouse” game between users and regulators is in full swing. One notable example involved users successfully using the photo mode in Death Stranding to fool face-scanning software—proving that as long as there is a gate, there will be a developer trying to pick the lock.

Conclusion: The Future of Online Privacy

While some may jump ship to Discord alternatives, the reality is that any platform operating in major markets will eventually face similar regulatory pressure. At Digital Tech Explorer, we believe the solution lies in better technology—perhaps decentralized identity or zero-knowledge proofs—rather than handing over our IDs to every website we visit.
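To make the data-minimization idea concrete, here is a minimal sketch of an age attestation in which the website only ever receives a signed yes/no claim, never a birth date or ID document. This is not a zero-knowledge proof and not how KWS, K-ID, or Persona work; it is a simplified stand-in. The names `ISSUER_KEY`, `issue_attestation`, and `verify_attestation` are hypothetical, and the shared-secret HMAC is an assumption made to keep the example within the standard library. A real system would use public-key signatures or an actual zero-knowledge scheme so that sites cannot mint their own attestations.

```python
import hashlib
import hmac
import json
import secrets
from datetime import date

# Hypothetical key shared by issuer and verifier (an assumption for this
# sketch); real deployments would use public-key signatures instead.
ISSUER_KEY = secrets.token_bytes(32)


def years_between(born: date, today: date) -> int:
    """Whole years elapsed from born to today."""
    had_birthday = (today.month, today.day) >= (born.month, born.day)
    return today.year - born.year - (0 if had_birthday else 1)


def issue_attestation(born: date, today: date, key: bytes = ISSUER_KEY) -> dict:
    """Issuer side: sees the birth date, but emits only a boolean claim."""
    claim = {
        "over_18": years_between(born, today) >= 18,
        "nonce": secrets.token_hex(8),  # makes each token unique
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "tag": tag}


def verify_attestation(token: dict, key: bytes = ISSUER_KEY) -> bool:
    """Site side: checks integrity and the claim; never sees a birth date."""
    payload = json.dumps(token["claim"], sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    untampered = hmac.compare_digest(expected, token["tag"])
    return untampered and token["claim"]["over_18"] is True
```

The design point is that the token contains only `over_18` and a nonce, so a breach on the website's side would leak nothing that identifies the user. The issuer still sees the birth date in this sketch, which is exactly the gap that zero-knowledge approaches aim to close.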

Until these vendors can prove their systems are ironclad and their privacy policies are beyond reproach, the tech community will likely continue to view these “safety” measures with a healthy dose of skepticism. Stay tuned as we continue to track the evolution of the Online Safety Act and its impact on your digital life.