In the rapidly evolving landscape of digital innovation, timing and messaging are everything. Micron, however, appears to be testing the limits of irony with its latest narrative. The company recently published a blog post titled “The new performance bottleneck: How more GPU memory unlocks next gen gaming and AI PCs.” While the technical insights are sound, the premise feels remarkably detached from the company’s recent strategic pivot.

At Digital Tech Explorer, we closely monitor how industry shifts affect the end user. It is hard to forget that just last December, Micron announced a “difficult decision” to exit the Crucial consumer business. This move was designed to redirect resources toward “strategic customers” in the high-growth AI data center sector. For the average PC gamer or independent developer, being told that more memory is the key to the future, just as the manufacturer steps away from the consumer market, is a bitter pill to swallow.

The Evolution of GDDR7 and the 96GB Threshold

Micron’s central argument is that the next era of PC performance will be defined by memory scale rather than mere compute power. The discussion concerns GDDR7 VRAM for GPUs rather than standard system DDR5 RAM, and the scale Micron is proposing is staggering. The company is highlighting its latest evolution of GDDR7, featuring a new 24 Gb (gigabit) density, which works out to 3 GB per memory chip.

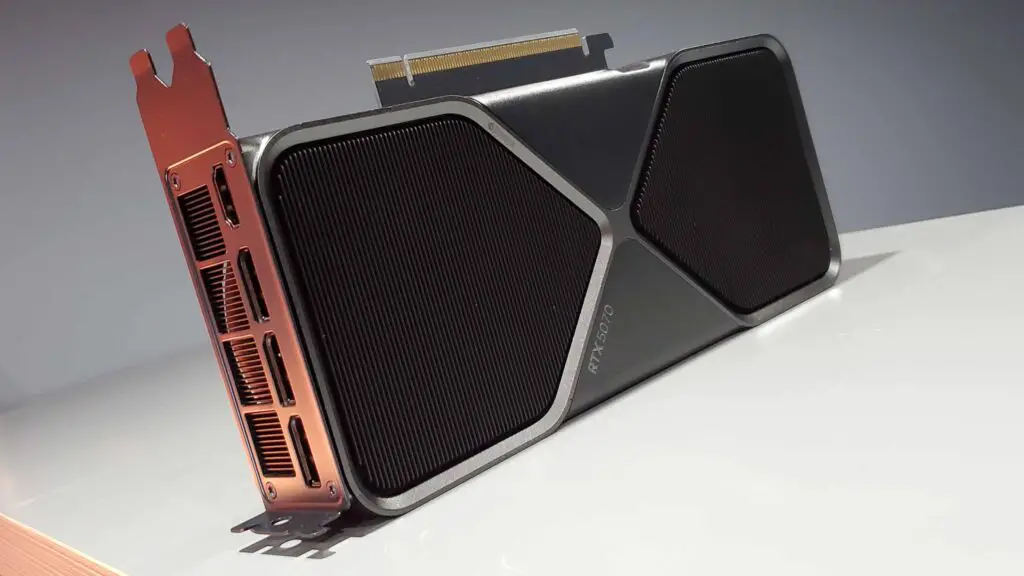

According to Micron, this technology enables up to 96 GB of graphics memory. This would theoretically provide GPUs with the headroom required for ultra-high-resolution textures, expansive open worlds, and complex machine learning tasks on AI PCs. For PC gamers, however, these numbers feel like a distant dream. The market is currently grappling with reports that Nvidia is scaling back production of more accessible 16 GB models, such as the RTX 5060 Ti and RTX 5070 Ti, due to supply constraints.
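To make the capacity arithmetic concrete, here is a minimal sketch of how a per-chip density turns into a total VRAM pool. It assumes the "24" figure refers to a 24-gigabit chip (3 GB), that each GDDR7 chip occupies a 32-bit slice of the memory bus, and that a "clamshell" layout doubles the chip count on the same bus. The function names and the 512-bit bus in the usage note are our own illustrative choices, not something Micron's post specifies.

```python
import math

BITS_PER_BYTE = 8
CHIP_INTERFACE_BITS = 32  # assumption: one GDDR7 chip per 32 bits of bus width


def chip_capacity_gb(density_gbit: float) -> float:
    """Convert a chip density in gigabits to gigabytes."""
    return density_gbit / BITS_PER_BYTE


def chips_needed(total_gb: float, density_gbit: float) -> int:
    """Number of chips required to reach a target VRAM capacity."""
    return math.ceil(total_gb / chip_capacity_gb(density_gbit))


def total_vram_gb(bus_width_bits: int, density_gbit: float, clamshell: bool = False) -> float:
    """Total VRAM for a given bus width, optionally with two chips per 32-bit slice."""
    chips = bus_width_bits // CHIP_INTERFACE_BITS
    if clamshell:
        chips *= 2  # assumption: clamshell mode places chips on both sides of the board
    return chips * chip_capacity_gb(density_gbit)


if __name__ == "__main__":
    print(chip_capacity_gb(24))                     # GB per 24 Gb chip
    print(chips_needed(96, 24))                     # chips required for 96 GB
    print(total_vram_gb(512, 24, clamshell=True))   # hypothetical 512-bit clamshell card
```

Under these assumptions, reaching 96 GB takes 32 chips, which is far more memory silicon per card than a mainstream 16 GB design needs.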

| Feature | Micron GDDR7 Specification | Impact on Gaming/AI |
|---|---|---|
| Density | 24 Gb (3 GB) per chip | Enables massive total VRAM pools (up to 96 GB) |
| Primary Focus | High-Resolution Textures | Reduces texture “pop-in” and stuttering |
| Secondary Focus | AI Acceleration | Improves on-device Large Language Model (LLM) performance |
| Target Market | Next-Gen Enthusiast/Server | Bridges the gap between consumer gaming and professional AI |

Why Memory Scale is the Real Frontier

From a storytelling perspective, Micron’s narrative about “cinematic quality” makes sense. Modern games are no longer just rendering shapes; they are simulating environments. Real-time ray tracing requires constant access to massive datasets involving geometry, lighting maps, and shadows. When GPU memory hits its limit, the system must swap assets, leading to uneven frame times and sudden performance drops.
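For a rough sense of why textures alone can exhaust a VRAM budget, consider the following back-of-the-envelope sketch. It assumes uncompressed 4096×4096 textures at 4 bytes per texel with a full mipmap chain; real games typically use block compression and streaming, so actual footprints are smaller. The function names and budget figures are hypothetical and chosen only for illustration.

```python
GIB = 1024 ** 3


def texture_bytes(width: int, height: int, bytes_per_texel: int = 4, mipmaps: bool = True) -> int:
    """Bytes used by one texture, optionally including every mip level down to 1x1."""
    total = 0
    w, h = width, height
    while True:
        total += w * h * bytes_per_texel
        if not mipmaps or (w == 1 and h == 1):
            break
        # Each mip level halves both dimensions, never dropping below 1.
        w, h = max(1, w // 2), max(1, h // 2)
    return total


def textures_that_fit(budget_gib: int, width: int, height: int, mipmaps: bool = True) -> int:
    """How many identical textures fit entirely within a VRAM budget."""
    return (budget_gib * GIB) // texture_bytes(width, height, mipmaps=mipmaps)


if __name__ == "__main__":
    for budget in (16, 96):
        print(budget, "GiB:", textures_that_fit(budget, 4096, 4096), "textures")
```

Once the resident set exceeds that count, something has to be evicted and re-uploaded, which is where the uneven frame times come from.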

As AI-assisted rendering techniques become standard, the data load on the GPU grows substantially. Micron argues, plausibly, that memory capacity is a primary obstacle to achieving true “on-device AI” responsiveness. The technology is impressive, and for hardware enthusiasts, the prospect of 96 GB of VRAM is revolutionary.
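The on-device AI angle comes down to simple arithmetic: a model's weights must fit in VRAM alongside its working data. The sketch below estimates weight size from parameter count and precision. The 20% overhead allowance for caches and activations is a placeholder assumption of ours, not a measured figure, and the 70-billion-parameter model is a hypothetical example.

```python
def weights_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate size of model weights in GB (decimal) at a given precision."""
    return params_billions * bits_per_param / 8


def fits_in_vram(params_billions: float, bits_per_param: int, vram_gb: float,
                 overhead_fraction: float = 0.2) -> bool:
    """Check whether weights plus an assumed runtime overhead fit in the VRAM pool."""
    needed = weights_gb(params_billions, bits_per_param) * (1 + overhead_fraction)
    return needed <= vram_gb


if __name__ == "__main__":
    # Hypothetical 70B-parameter model at several precisions, on 16 GB and 96 GB cards.
    for bits in (16, 8, 4):
        size = weights_gb(70, bits)
        print(f"{bits}-bit: {size:.0f} GB weights,",
              "fits 16 GB:", fits_in_vram(70, bits, 16),
              "| fits 96 GB:", fits_in_vram(70, bits, 96))
```

Under these assumptions, a model of that size is out of reach for a 16 GB card at any of these precisions, while a 96 GB pool can hold it once the weights are quantized to 8 bits or fewer.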

The Strategic Disconnect

The tension here lies in the industry’s priorities. The “faster-growing segments” Micron refers to are almost exclusively tied to the AI boom. While the company champions the benefits of high-capacity memory for gaming, its manufacturing focus has shifted toward the enterprise data centers that power the AI and machine learning giants.

Here at Digital Tech Explorer, we see this as a pivotal moment in PC gaming history. We are being told what we need for the “future” of play, while the components necessary to reach that future are increasingly funneled into server racks instead of consumer rigs. Micron’s technical roadmap is visionary, but until that GDDR7 technology is readily available in consumer-grade GPUs at a reasonable price, it remains a fascinating story rather than a practical reality.

Stay tuned to Digital Tech Explorer for further updates on upcoming releases and the latest in GPU innovations. For more insights into digital trends, visit TechTalesLeo’s author page.