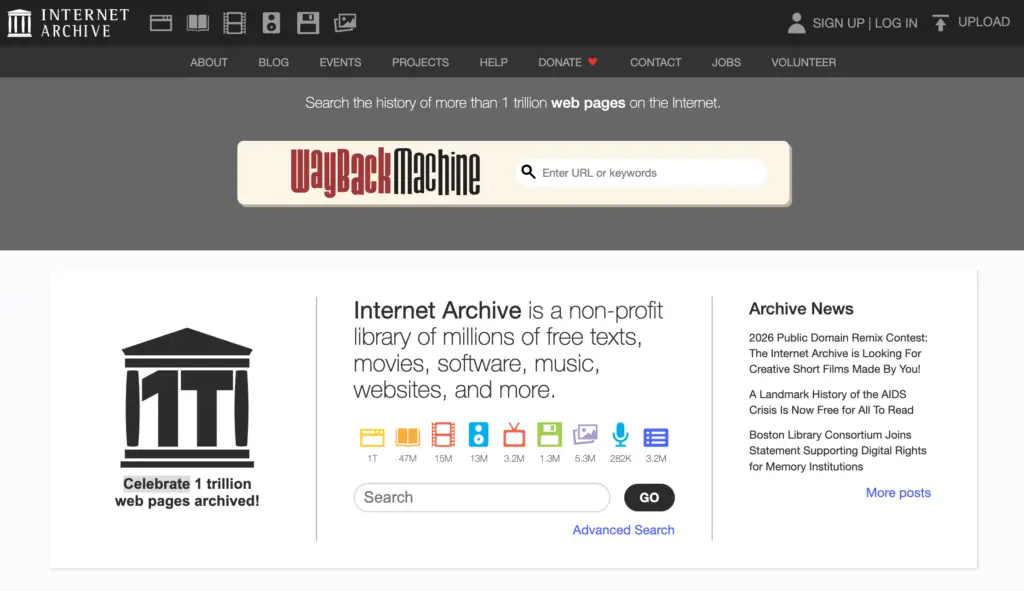

The preservation of our online history is colliding with the protection of intellectual property. The Internet Archive, the non-profit organization behind the indispensable Wayback Machine, has found itself increasingly locked out of major platforms. Reddit, The New York Times, and The Guardian have all implemented access blocks, citing the rising threat of AI scraping as their main justification.

As we track these shifts here at Digital Tech Explorer, it becomes clear that the tension between data accessibility and corporate security is reaching a breaking point. Mark Graham, director of the Wayback Machine at the Internet Archive, has recently addressed these restrictions, asserting that while the anxieties regarding AI are understandable, they are ultimately “unfounded” when applied to archival missions.

The Internet Archive’s Defense Against AI Scraping

In a detailed commentary featured on Techdirt, Graham clarified the Archive’s operational philosophy. He emphasized that the Wayback Machine is fundamentally “built for human readers,” not for automated consumption by large language models or AI bots. To protect the integrity of its repository, the Archive has deployed technical safeguards designed to distinguish genuine researchers from malicious scrapers.

From a technical perspective, the Archive utilizes a multi-layered defense strategy to maintain service stability and prevent mass data harvesting:

| Protection Layer | Description | Target Objective |
|---|---|---|
| Rate Limiting | Restricts the number of requests a single IP can make within a specific timeframe. | Prevents high-speed automated harvesting. |
| Advanced Filtering | Identifies and blocks known malicious bot signatures and scraping patterns. | Ensures bandwidth is reserved for human users. |
| Active Monitoring | Real-time analysis of traffic spikes and unusual behavior. | Rapid response to new abuse methods. |
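To make the rate-limiting layer in the table above more concrete, here is a minimal sketch of a per-IP sliding-window limiter. It is an illustration only, not the Internet Archive's actual implementation: the class name `SlidingWindowRateLimiter`, its parameters, and the example limits are all assumptions chosen for the demonstration.

```python
import time
from collections import defaultdict, deque


class SlidingWindowRateLimiter:
    """Hypothetical limiter: at most `max_requests` per IP per `window_seconds`."""

    def __init__(self, max_requests, window_seconds, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._hits = defaultdict(deque)  # IP address -> timestamps of accepted requests

    def allow(self, ip):
        """Return True if this request from `ip` fits within the limit."""
        now = self.clock()
        hits = self._hits[ip]
        # Discard timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: reject (a server might answer HTTP 429)
        hits.append(now)
        return True
```

A server would call something like `limiter.allow(client_ip)` before serving each request. Because each IP is tracked separately, a human reader browsing a handful of pages is unaffected, while a client firing hundreds of requests per minute from one address is cut off until its older requests age out of the window.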

Despite these rigorous measures, many publishers remain unconvinced, leading to a broader debate about the future of the open web.

The High Cost of Blocking Digital History

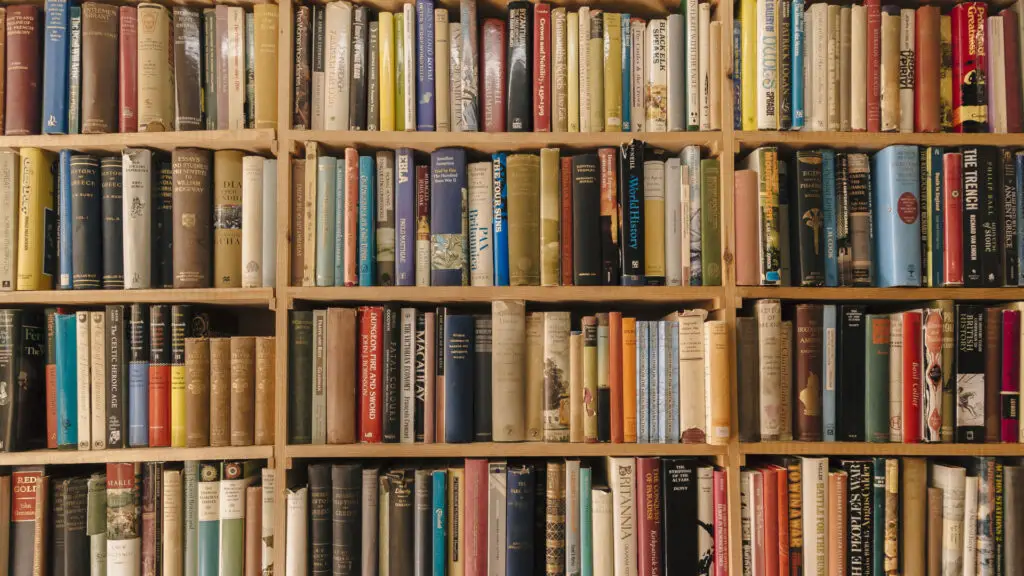

The consequences of these blocks extend far beyond a simple technical dispute. TechTalesLeo views this as a critical moment for digital storytelling; if we lose the ability to look back, we lose the ability to hold the present accountable. Graham argues that blocking archives is not a viable solution to the AI problem and warns of “serious harm to the public record.”

When major publications and social platforms opt out of archiving, the following risks emerge:

- Loss of Accountability: Journalists and developers lose the ability to verify past statements or track changes in public discourse.

- Evidence Erosion: Researchers lose vital primary source material that documents the evolution of software and society.

- Historical Fragility: The web becomes fragmented, making it easier for information to be altered or deleted without a trace.

The Intersection of Preservation and Revenue

While AI scraping is the headline concern, there is an underlying economic tension at play. For subscription-based outlets like The New York Times, archival tools are sometimes viewed through the lens of revenue protection. There is a persistent fear that these tools could be used to circumvent paywalls, though the Internet Archive maintains its focus is strictly on preservation rather than enabling piracy.

This creates a complex dilemma for the tech community. Developers and tech enthusiasts rely on the Wayback Machine for documentation and historical context, yet content creators need sustainable business models to survive. As AI development accelerates, finding a balance between protecting intellectual property and maintaining a transparent historical record will be one of the most significant challenges of our era.

At Digital Tech Explorer, we believe that transparency and research are paramount. The loss of digital history is too high a price to pay for security concerns that could be addressed through better technical collaboration rather than outright exclusion.