In the fast-paced world of digital innovation, benchmark transparency is the cornerstone of trust for developers and enthusiasts alike. Primate Labs, the company behind the industry-standard Geekbench, recently sent ripples through the tech community by announcing that it will attach a formal warning to benchmark results produced by Intel’s new Arrow Lake Plus CPUs. The point of contention? Intel’s new Binary Optimization Tool (BOT).

At Digital Tech Explorer, we believe in deep-diving into these technical shifts to help you stay ahead of the curve. According to Primate Labs, this tool, which is exclusive to the freshly launched Arrow Lake Plus and upcoming Panther Lake silicon, can inflate Geekbench 6 scores by up to 8% overall, with specific workloads jumping by as much as 40%. Because these optimizations happen “under the hood” and alter standard benchmark behavior, Primate Labs has deemed these results non-comparable to standard runs.

The core of the issue lies in detection. Primate Labs admits it currently lacks the telemetry to tell whether a result was generated with or without the Binary Optimization Tool active. Consequently, a blanket warning will now appear on all Arrow Lake Plus benchmarks: “This benchmark result may be invalid due to binary modification tools that can run on this system.”
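To make the "blanket warning" logic concrete, here is a minimal sketch in Python. It is not Primate Labs' implementation: the `Result` type, the `BOT_CAPABLE_FAMILIES` set, and the `warning_for` function are hypothetical names chosen for illustration. The point it shows is that, with no way to detect BOT per run, the only available signal is the CPU family, so every result from that family gets flagged.

```python
from dataclasses import dataclass
from typing import Optional

# Warning text as quoted in the article.
WARNING = (
    "This benchmark result may be invalid due to binary modification "
    "tools that can run on this system."
)

# Hypothetical label set: the article says the warning applies to
# Arrow Lake Plus results. How Geekbench identifies a family is not known here.
BOT_CAPABLE_FAMILIES = {"Arrow Lake Plus"}


@dataclass
class Result:
    cpu_family: str  # assumed field; the real result schema may differ
    score: int


def warning_for(result: Result) -> Optional[str]:
    """Return the warning for any result from a BOT-capable family.

    There is no per-run check for whether BOT was active, so the
    decision depends on the CPU family alone.
    """
    if result.cpu_family in BOT_CAPABLE_FAMILIES:
        return WARNING
    return None
```

Under these assumptions, two Arrow Lake Plus results, one run with BOT and one without, would carry the same warning, which is exactly the trade-off the blanket approach accepts.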

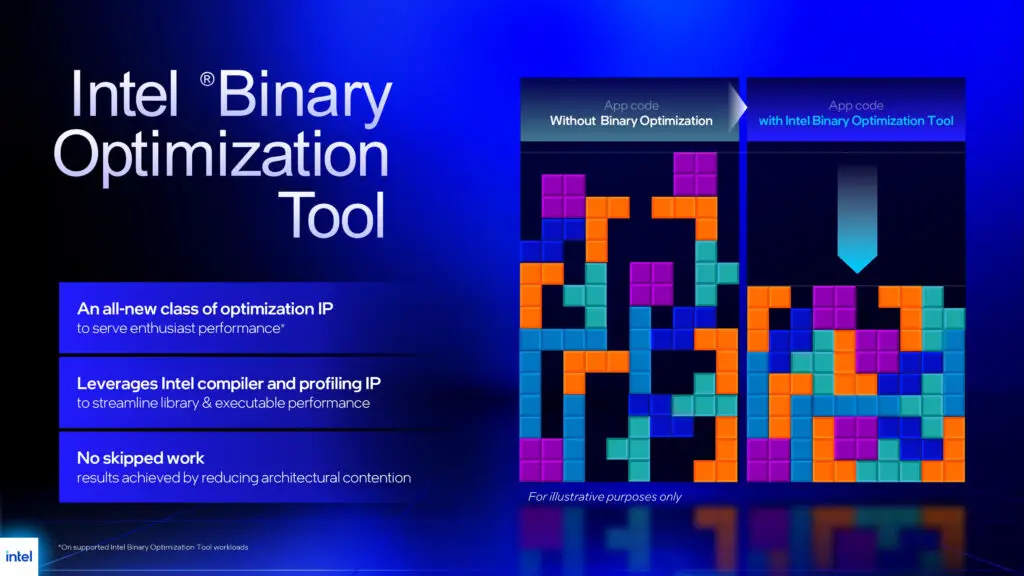

What is Intel’s BOT and Why the Controversy?

As a storyteller in the tech space, I find the mechanics of BOT fascinating. Essentially, the tool re-orders a program’s instructions so they flow through the Arrow Lake Plus pipeline with fewer wasted cycles. It doesn’t “skip” work or cheat the math; rather, it aims to keep the CPU’s microarchitecture as busy as possible. However, because BOT requires application-specific profiles created by Intel, it isn’t a universal performance boost for every piece of software you own.
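The idea of re-ordering instructions without skipping work can be illustrated with a toy model. The sketch below is not how BOT works internally, which the article does not describe. It assumes a deliberately simple in-order, single-issue pipeline, and the `Instr` type, the latencies, and the greedy `reorder` scheduler are all made up for illustration. A real out-of-order CPU does much of this re-ordering itself in hardware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instr:
    dest: str     # register written (each register is written once here)
    srcs: tuple   # registers read
    latency: int  # cycles until the result is available


def _start(ins, issue, ready):
    # An instruction starts once the issue slot is free and all inputs are ready.
    return max([issue] + [ready.get(s, 0) for s in ins.srcs])


def cycles(program):
    """Cycles to finish `program` on a toy in-order, single-issue pipeline."""
    ready, issue, finish = {}, 0, 0
    for ins in program:
        start = _start(ins, issue, ready)
        ready[ins.dest] = start + ins.latency
        issue = start + 1
        finish = max(finish, ready[ins.dest])
    return finish


def reorder(program):
    """Greedy scheduler: always issue the dependency-safe instruction
    that can start earliest. No instruction is added or dropped."""
    produced = {ins.dest for ins in program}
    remaining, scheduled, ready, issue = list(program), [], {}, 0
    while remaining:
        # Safe candidates: every source is an external input or already scheduled.
        safe = [i for i in remaining
                if all(s in ready or s not in produced for s in i.srcs)]
        best = min(safe, key=lambda i: _start(i, issue, ready))
        start = _start(best, issue, ready)
        ready[best.dest] = start + best.latency
        issue = start + 1
        scheduled.append(best)
        remaining.remove(best)
    return scheduled


# Two independent load-then-add chains that meet in a final add.
program = [
    Instr("a", ("x",), 4),      # a = load x
    Instr("b", ("a",), 1),      # b = a + 1  (stalls waiting for a)
    Instr("c", ("y",), 4),      # c = load y
    Instr("d", ("c",), 1),      # d = c + 1  (stalls waiting for c)
    Instr("e", ("b", "d"), 1),  # e = b + d
]
optimized = reorder(program)
```

In this toy model the original order takes 11 cycles and the re-ordered one takes 7, because the second load is issued while the first is still in flight. The same five instructions run in both cases, which mirrors the claim that the gain comes from ordering rather than from doing less work.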

To provide a clearer picture of the impact, here is a breakdown of how the Binary Optimization Tool influences performance metrics:

| Metric | Estimated Impact | Implication |
|---|---|---|
| Average Geekbench 6 Multi-Core Score | Up to 8% Increase | Results are no longer comparable to standard CPU architectures. |
| Specific Workload Peaks | Up to 40% Increase | Highlights potential of BOT for optimized professional software. |
| Compatibility | Profile-Dependent | Only works on software Intel has specifically analyzed. |
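It may help to see how a 40% jump in one workload relates to a single-digit change in a composite score. The sketch below assumes the composite is a plain geometric mean over 15 equally weighted workloads. Both the aggregation method and the workload count are assumptions for illustration and may not match how Geekbench 6 actually weights its sections.

```python
import math


def geomean(values):
    """Geometric mean of positive numbers."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


def overall_gain(speedups):
    """Relative change in a geometric-mean composite.

    `speedups` holds one factor per workload (1.0 = unchanged, 1.4 = 40% faster).
    Baseline scores cancel out, so only the factors matter.
    """
    return geomean(speedups) - 1.0


# Hypothetical suite of 15 workloads.
one_peak = [1.40] + [1.00] * 14        # a single workload gains 40%
several_peaks = [1.40] * 4 + [1.00] * 11  # four workloads gain 40%

gain_one = overall_gain(one_peak)
gain_several = overall_gain(several_peaks)
```

Under these assumptions, a lone 40% peak moves the composite by a little over 2%, while four such peaks push it past 9%. An overall figure near 8% therefore suggests the gains are spread across several workloads rather than concentrated in one.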

A Legacy of Benchmark Optimization

This isn’t the first time we’ve seen tension between hardware manufacturers and benchmarking bodies. At Digital Tech Explorer, we often look at the history of digital trends to understand the present. Back in 2009, Intel faced scrutiny when its ICC compiler was found to disadvantage AMD architectures. More recently in 2024, SPEC invalidated thousands of Intel results due to “unfair” optimizations that didn’t reflect real-world performance.

The concern today is that tools like BOT could trigger a “benchmark arms race.” If every manufacturer releases proprietary tools to boost scores in specific tests, benchmarks lose their value as a neutral ground for comparing different GPU and CPU configurations.

Final Thoughts: A Move Toward Transparency

While the Binary Optimization Tool represents an impressive feat of engineering for pipeline efficiency, Primate Labs’ decision to flag these results is a win for transparency. For the professional developer or the hardcore gamer, knowing whether a score represents “out-of-the-box” performance or a highly tuned profile is essential for making informed purchasing decisions.

As we continue to monitor the evolution of Intel’s supported CPU list and Geekbench’s detection methods, stay tuned to Digital Tech Explorer for the latest updates on how these changes affect your digital toolkit.
