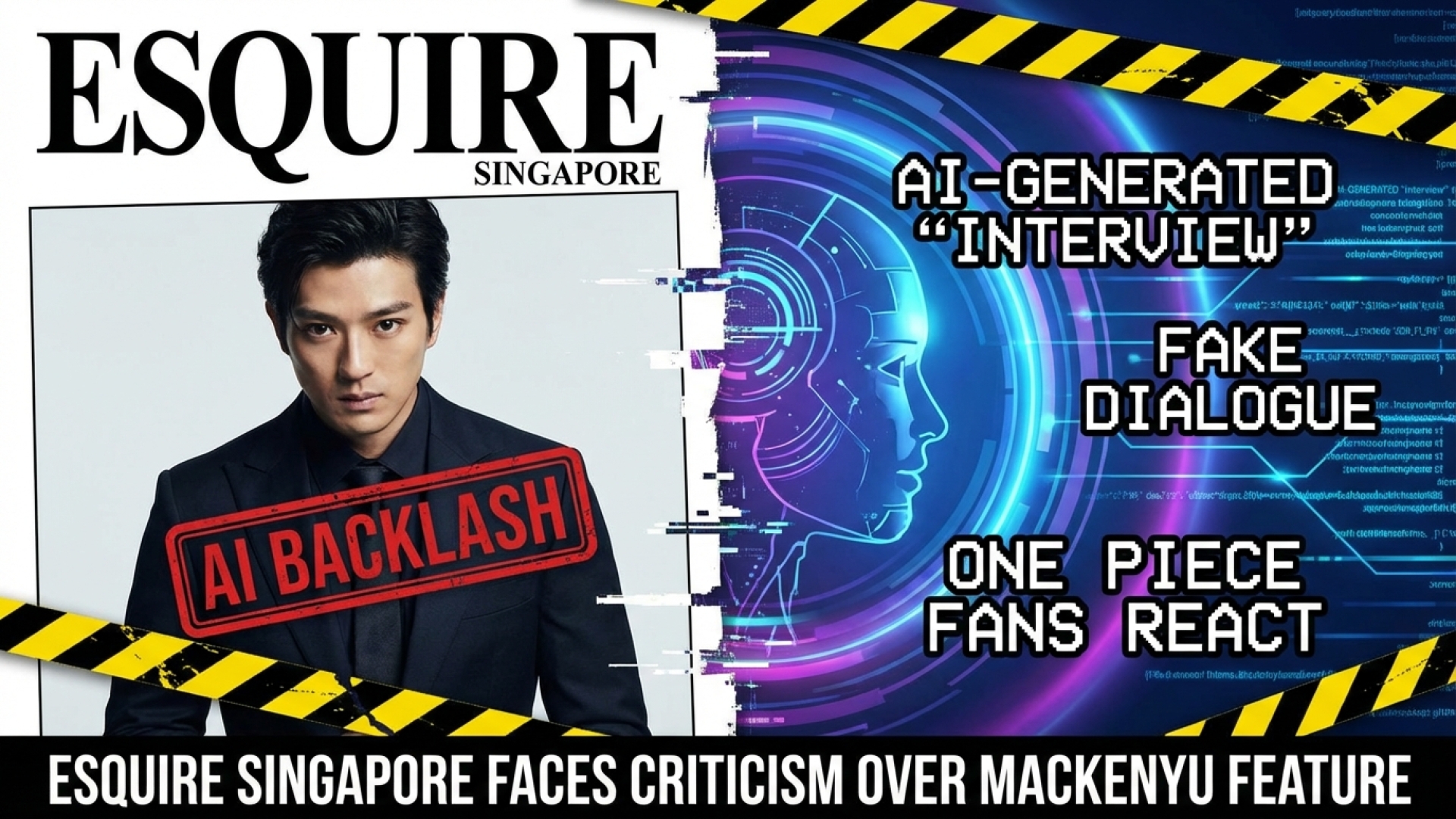

In an era where artificial intelligence is rapidly blurring the lines between authentic human experience and digital simulation, a recent controversy involving Esquire Singapore and actor Mackenyu Arata has ignited a firestorm within the tech and journalism communities. At Digital Tech Explorer, we prioritize transparency and real-world testing, but this specific case highlights a worrying trend where “creative license” overrides journalistic integrity.

The Simulation of a Star: How the AI Interview Happened

The feature, titled “Mackenyu in Resonance,” was published in March and initially presented as an “unprecedented interview” with the One Piece star. However, it wasn’t long before the tech-savvy audience at Kotaku and beyond noticed something uncanny. The writer, Joy Ling, eventually revealed that the entire interaction was a construct. Because Mackenyu’s schedule was reportedly too packed for a sit-down, the publication decided to feed his previous verbatim responses into AI models to “formulate” a new conversation.

To pull this off, the team used AI tools such as Claude and Microsoft Copilot. Both are widely used AI assistants, but using them to simulate a father-son dynamic or personal “disillusionment” felt hollow to many readers. The output included artificial cues like “(laughs),” and critics described the result as “unsubstantial meandering” rather than meaningful dialogue.

The Tools of the Trade

For those interested in the technical side of how this content was synthesized, here is a breakdown of the primary tools involved in the Esquire controversy:

| Tool Name | Primary Function | Role in Incident |
|---|---|---|
| Claude (Anthropic) | Large Language Model (LLM) | Used to process verbatim responses and generate conversational flows. |
| Microsoft Copilot | AI Productivity Assistant | Assisted in formulating and refining the “interview” responses. |
| Creative License | Editorial Strategy | Used as a justification for replacing a human interview with synthetic data. |

Journalistic Integrity vs. Digital Innovation

The backlash was swift. Industry veterans like Nicole Clark took to social media to condemn the practice, arguing that presenting AI-generated content as a genuine interview is a form of misinformation. The sentiment was echoed by the broader public, with some users describing the piece as “fan fiction” rather than journalism. The core of the anger stems from the breach of trust; readers expect a human connection when a publication promises a celebrity feature.

At Digital Tech Explorer, we often cover how machine learning can enhance creativity, but there is a clear line between using AI as a tool and using it as a replacement for human presence. This incident mirrors other recent ethical lapses in the industry, such as companies cloning the voices or writing styles of journalists without consent.

The Future of Content Authenticity

This “golden age of the AI-operated sock puppet” raises significant questions for the future of digital media. As a storyteller who bridges the gap between complex technology and everyday usability, I believe we must demand more from our publishers. Whether it is blockchain verification for content or simply more rigorous editorial standards, the industry needs a roadmap to navigate the ethical pitfalls of generative AI.

The Esquire incident serves as a cautionary tale. While the technology behind AI is impressive, it cannot replicate the nuance, emotion, and unpredictability of a real human conversation. As we move forward into 2024 and beyond, the value of “real-world testing” and authentic reporting will only increase in a sea of synthetic voices.

