In the rapidly evolving landscape of AI technology, the spotlight usually shines on the newest, most powerful hardware hitting the market. However, the reality within data centers tells a different story. As we’ve observed here at Digital Tech Explorer, staying ahead of tech trends often means understanding the value of legacy systems. Brannin McBee, CoreWeave’s co-founder and chief development officer (CDO), recently shared insights on the tech talk show TBPN that confirm a surprising trend: older GPUs are not just surviving; they are thriving.

Why Legacy GPUs are Seeing Price Hikes

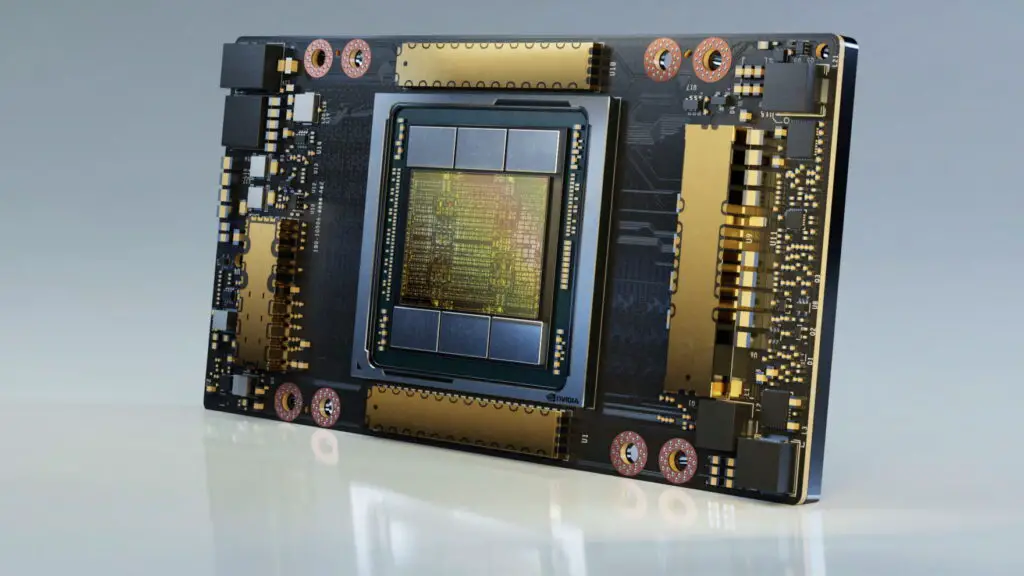

According to McBee, “late 2010s SKUs” remain both prevalent and highly profitable in cloud environments. Interestingly, while pricing for the cutting-edge Hopper-generation GPUs has remained relatively stable, the cost of Ampere-generation GPUs is projected to increase through 2025. This pricing anomaly highlights a sustained reliance on previous-generation AI acceleration hardware, even as newer architectures like Blackwell begin to roll out.

The Enduring Demand for Ampere Architecture

The Ampere architecture, launched around 2020, is well-known to the gaming community via the RTX 30-series. In the enterprise world, the Nvidia A100 GPU became the workhorse of the initial generative AI boom. McBee emphasizes that there is “incredibly robust demand” for these older units because not every AI workload requires maximum compute power.

For many AI inference tasks (running a trained model to make predictions), the A100 offers a good balance of cost and performance. Matching older GPUs to workloads that do not need top-end compute avoids paying for unused capacity, which keeps these chips in high demand.
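One rough way to see why an older card can be enough for inference is to estimate how much memory a model's weights need. The sketch below is a back-of-the-envelope illustration, not a sizing tool: the function names and the 80% headroom margin are our own assumptions, it counts weights only (ignoring activations, caches, and framework overhead), and it assumes the 80 GB variant of the A100.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes).

    bytes_per_param=2 corresponds to 16-bit weights (FP16 or BF16).
    """
    return num_params * bytes_per_param / 1e9


def fits_on_gpu(num_params: float, gpu_memory_gb: float,
                bytes_per_param: int = 2, headroom: float = 0.8) -> bool:
    """True if the weights fit within a fraction of the GPU's memory.

    `headroom` is an assumed safety margin, not a vendor recommendation.
    """
    needed = weight_memory_gb(num_params, bytes_per_param)
    return needed <= gpu_memory_gb * headroom


A100_MEMORY_GB = 80  # assumption: the 80 GB A100 variant

for params in (7e9, 70e9):
    needed = weight_memory_gb(params)
    verdict = "fits" if fits_on_gpu(params, A100_MEMORY_GB) else "does not fit"
    print(f"{params / 1e9:.0f}B parameters: ~{needed:.0f} GB of weights, {verdict} on one A100")
```

Under these assumptions, a 7-billion-parameter model needs about 14 GB for its weights and sits comfortably on a single A100, while a 70-billion-parameter model at 16-bit precision does not fit on one card.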

Comparing Nvidia GPU Generations for AI

To help our community of developers and enthusiasts understand the current hardware landscape, we’ve broken down the key generations currently fueling the industry:

| Architecture | Primary Enterprise GPU | Key Use Case | Market Status |
|---|---|---|---|
| Ampere | Nvidia A100 | AI Inference & Mid-range Training | High Demand / Rising Prices |
| Hopper | Nvidia H100 / H200 | Large Language Model (LLM) Training | Stable Pricing / High Adoption |
| Blackwell | Nvidia GB200 / B100 | Next-Gen Generative AI & Trillion-Parameter Models | Rolling Out / Supply Constrained |
| Rubin | Vera Rubin Superchip | Future-Proofing AI Compute | Expected Late 2025/2026 |

Market Dynamics: Why “Old” is the New “Gold”

The massive capital flowing into the AI sector has created a “take what you can get” mentality. While Nvidia’s newer Hopper and Blackwell chips offer superior efficiency, persistent supply constraints force companies to look backward to fill their server racks. This isn’t just an enterprise issue; as TechTalesLeo, I’ve noted how these global trends ripple down to consumer levels, impacting memory prices and availability for general tech enthusiasts.

Geopolitics also plays a role. With restricted access to the latest Blackwell chips in certain regions like China, the competition for available Hopper and Ampere stock has intensified. For developers and tech professionals, this underscores the importance of optimizing code for various hardware generations. At Digital Tech Explorer, we believe that understanding these shifts is crucial for making informed decisions, whether you’re scaling a startup’s server or upgrading your personal workstation.
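For developers wondering what optimizing for several hardware generations can look like, here is a minimal sketch. It maps a CUDA compute capability to the data center generations discussed above and picks the narrowest floating-point tensor-core format each one supports. The table and the `pick_precision` helper are hypothetical names of our own, and the mapping covers only these three data center generations, so treat it as a starting point rather than a complete lookup.

```python
# Compute capability major version -> (architecture, narrowest floating-point
# tensor-core format). Illustrative only: it covers the A100 (8.0),
# H100/H200 (9.0) and B100/B200 (10.0) class of data center parts, and does
# not special-case consumer chips such as Ada Lovelace (8.9).
GENERATIONS = {
    8: ("Ampere", "bf16"),
    9: ("Hopper", "fp8"),
    10: ("Blackwell", "fp4"),
}


def pick_precision(capability: tuple[int, int]) -> tuple[str, str]:
    """Return (architecture, precision) for a (major, minor) compute capability.

    Unknown hardware falls back to fp32, which every CUDA GPU supports.
    """
    major, _minor = capability
    return GENERATIONS.get(major, ("unknown", "fp32"))


# In a real project the tuple would come from the driver, for example via
# torch.cuda.get_device_capability(); hard-coded values keep this runnable
# on a machine without a GPU.
for cap in [(8, 0), (9, 0), (10, 0), (7, 0)]:
    arch, precision = pick_precision(cap)
    print(f"compute capability {cap[0]}.{cap[1]}: {arch}, use {precision}")
```

Keeping the generation table in one place means the rest of the code can ask for a precision instead of hard-coding assumptions about which GPU it will land on.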
