A coalition of advocacy groups has publicly denounced Roblox's newly introduced "sensitive issues" label for community-created content. The coalition is made up of Women in Games, Out Making Games, and Black, Asian and Minority Ethnic Talent (BAME in Games). In a joint letter, the groups call the content filtering system "a step backward for both creative expression and social justice." They argue that it frames issues of equality and human rights "as debatable rather than fundamental."

Roblox’s Content Filtering Policy Details

The controversy stems from an August announcement in which Roblox outlined a new policy. Under it, users under 13 cannot access certain content without explicit parental permission. At the center of the dispute is the platform's ambiguous definition of "sensitive issues." Roblox describes this category broadly as "any current sensitive social, political, or religious issue" that may involve "polarized viewpoints" or evoke "a strong emotional response." Roblox did list examples: immigration, capital punishment, gun control, marriage equality, pay equity in sports, prayer in schools, racial profiling, affirmative action, vaccination policies, and reproductive rights. However, it stated explicitly that the list is not exhaustive. That open-endedness leaves developers and content creators unsure which experiences the label will cover.

Advocacy Groups’ Concerns and Rebuttals

The advocacy groups challenged this approach directly in their letter: "We support efforts to keep children safe online—especially girls, who face disproportionate harassment and grooming. But safety cannot be achieved by silencing content that educates and empowers." The groups contend that the policy does not protect younger users. Instead, it risks teaching them that fundamental issues of justice and equality are merely controversial opinions rather than universal values. In their view, this could deepen the very social divisions the platform says it wants to reduce, undermining its stated intentions.

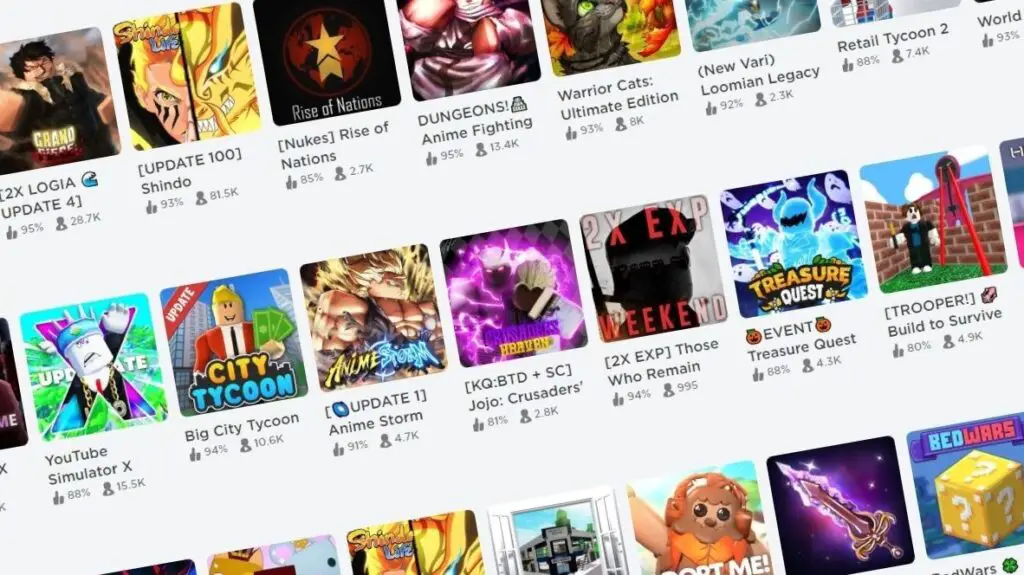

The new filter also invites scrutiny because of Roblox's recent adoption of established age rating systems. The platform has only recently begun integrating official ESRB ratings into its experiences, a move that relies on clear, external guidelines. Alongside that system, a separate, vaguely defined, and contentious "sensitive issues" label looks unnecessarily convoluted. It layers a subjective filtering mechanism on top of more transparent, objective frameworks. That raises questions about how clear and consistent the platform's content moderation is.